Revolutionary AI Infrastructure

Infercom is powered by SambaNova's dataflow architecture — purpose-built for AI inference, delivering unprecedented performance and efficiency.

- Up to 10x faster inference

- Up to 5x more energy efficient

- Up to 25TB memory per rack

Dataflow vs. GPU Architecture

Why purpose-built dataflow beats general-purpose GPUs for AI inference

The dataflow architecture is purpose-built for AI workloads: it creates custom processing pipelines for entire computation graphs while minimizing data movement.

- ✓ Entire model resident in memory

- ✓ Data flows through operations without intermediate writes

- ✓ Operator fusion: hundreds of operations in a single kernel

- ✓ Software-defined hardware optimizes for each workload

Traditional GPU

General-purpose design

GPUs execute a model kernel by kernel, which creates bottlenecks for AI inference workloads.

- ✗ Kernel-by-kernel execution creates overhead

- ✗ Excessive data movement between processor and memory

- ✗ Memory bandwidth bottleneck limits performance

- ✗ Underutilization of compute resources
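The contrast between the two lists above can be illustrated with a toy sketch. It is not a model of real GPU or RDU hardware: the three operations and the write counter are made up for illustration, and "intermediate writes" simply counts how often a full result is stored between operations.

```python
# Toy illustration only: counts simulated intermediate writes.
# The operations and counters are hypothetical, not SambaNova or GPU APIs.

OPS = [
    lambda v: v * 2.0,        # scale
    lambda v: v + 1.0,        # shift
    lambda v: max(v, 0.0),    # ReLU
]

def run_kernel_by_kernel(x, ops):
    """Each op runs as its own pass; every pass except the last
    stores a full intermediate result before the next one starts."""
    intermediate_writes = 0
    for op in ops[:-1]:
        x = [op(v) for v in x]
        intermediate_writes += 1
    x = [ops[-1](v) for v in x]  # final output, not an intermediate
    return x, intermediate_writes

def run_fused(x, ops):
    """All ops are applied to each element in one pass, so no
    intermediate result is stored between operations."""
    out = []
    for v in x:
        for op in ops:
            v = op(v)
        out.append(v)
    return out, 0

data = [-3.0, 0.5, 2.0]
unfused, writes_unfused = run_kernel_by_kernel(data, OPS)
fused, writes_fused = run_fused(data, OPS)
print(unfused == fused, writes_unfused, writes_fused)
```

Both paths produce the same values; only the number of stored intermediates differs, which is the effect operator fusion targets.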

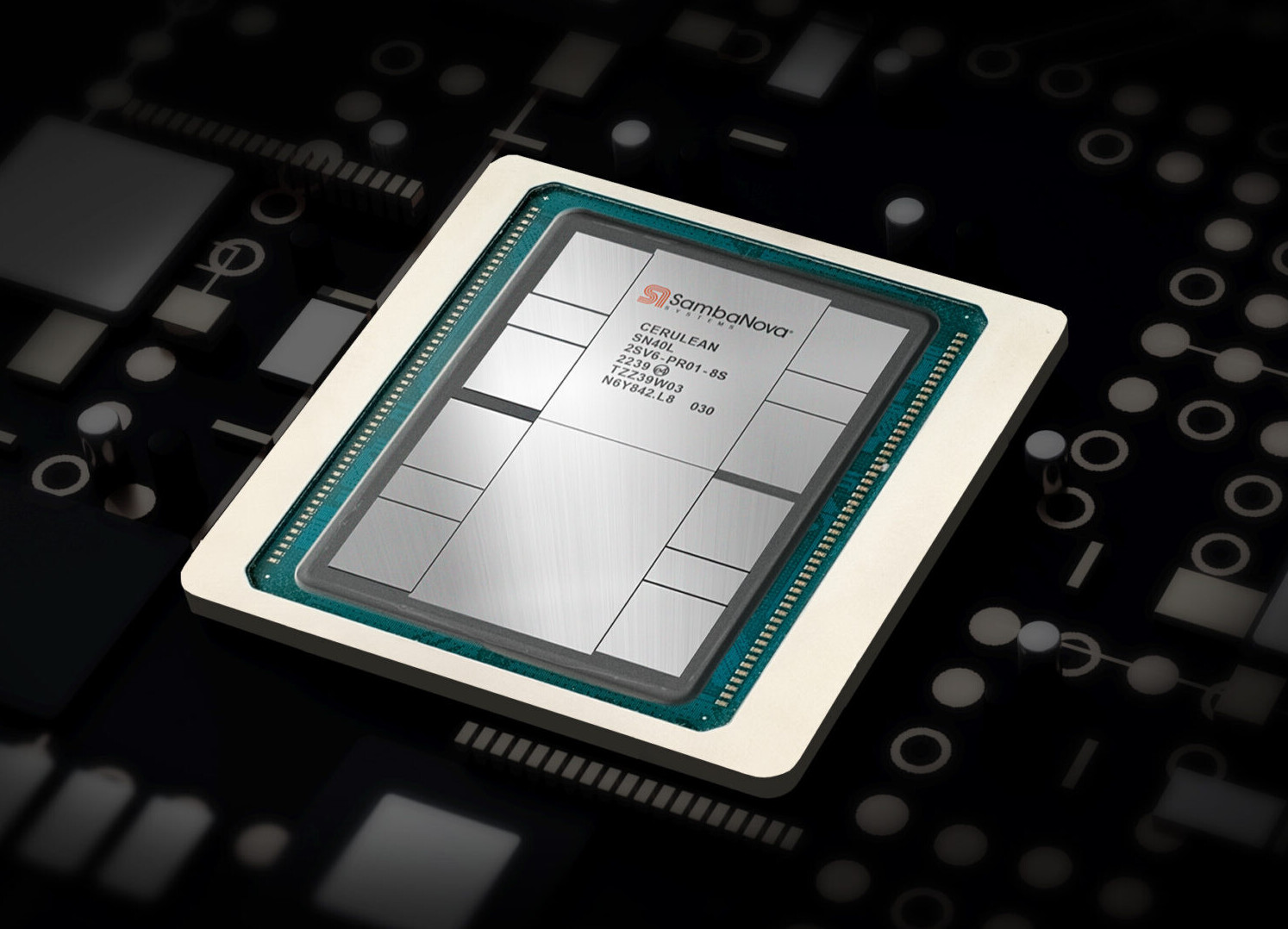

SN40L Reconfigurable Dataflow Unit

Built on TSMC's 5nm process with 1,040 compute cores per chip, delivering 638 BF16 TFLOPS per chip — 10.2 PetaFLOPS per rack.

SambaNova SN40L RDU — dual-die CoWoS package on TSMC 5nm

- 638 TFLOPS per chip (BF16)

- 1,040 PCUs (Pattern Compute Units) per chip

- 10.2 PFLOPS per rack

- Process: TSMC 5nm
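As a quick sanity check, the per-rack compute figure follows from the per-chip figure, assuming the 16-chip SN40L-16 rack described on this page. The variable names below are illustrative.

```python
# Per-chip figure from this page; chip count assumes the SN40L-16 rack.
TFLOPS_PER_CHIP_BF16 = 638
CHIPS_PER_RACK = 16

rack_tflops = TFLOPS_PER_CHIP_BF16 * CHIPS_PER_RACK  # 10,208 TFLOPS
rack_pflops = rack_tflops / 1000                     # 1 PFLOPS = 1,000 TFLOPS

print(f"{rack_pflops:.1f} PFLOPS per rack")  # prints "10.2 PFLOPS per rack"
```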

Three-Tier Memory per Chip

- SRAM: 520MB, ultra-fast on-chip cache

- HBM: 64GB, high-bandwidth co-packaged memory

- DDR: up to 1.5TB, off-package DIMM storage

Rack Configuration (SN40L-16)

- RDU chips: 16

- Total memory: up to 25TB

- Typical power: ~10kW

- Cooling: air
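The "up to 25TB" total is consistent with summing the three memory tiers across 16 chips. The sketch below assumes decimal units (1TB = 1,000GB = 1,000,000MB) and the maximum DDR configuration; both are assumptions rather than stated figures.

```python
# Per-chip capacities from this page, expressed in MB (decimal units assumed).
SRAM_MB = 520
HBM_MB = 64 * 1000         # 64GB
DDR_MB = 1500 * 1000       # up to 1.5TB (maximum configuration assumed)
CHIPS_PER_RACK = 16

per_chip_mb = SRAM_MB + HBM_MB + DDR_MB
rack_tb = per_chip_mb * CHIPS_PER_RACK / 1_000_000

print(f"{rack_tb:.2f} TB per rack")  # prints "25.03 TB per rack"
```

Almost all of the total comes from the DDR tier (16 x 1.5TB = 24TB); HBM adds about 1TB and SRAM is negligible at this scale.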

World-Record Performance

Performance is measured in output tokens per second. The MiniMax and DeepSeek-V3.1 benchmarks were measured on Infercom's EU infrastructure; the DeepSeek-R1 and gpt-oss figures come from Artificial Analysis (SambaNova Cloud).

MiniMax M2.5

NEW: EU Hosted

High-performance multimodal model now hosted on Infercom's EU infrastructure. Independently measured at 400+ tokens/sec by Artificial Analysis.

EU Sovereign

DeepSeek-R1 671B

10x vs GPU

The world's largest reasoning model at unprecedented speed. Up to 10x faster than GPU-based providers.

671B params

DeepSeek-V3.1

EU Hosted

128K context, full function calling, and JSON mode: one of Europe's most capable sovereign LLMs.

EU Sovereign
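To make "function calling and JSON mode" concrete, here is a minimal sketch of a request body. It assumes an OpenAI-style chat-completions format, which this page does not confirm for Infercom; the model identifier string and the `get_invoice_total` tool are hypothetical, and no request is actually sent.

```python
import json

# Assumptions (not from this page): OpenAI-style field names, the model
# identifier string, and the hypothetical get_invoice_total tool.
payload = {
    "model": "DeepSeek-V3.1",
    "messages": [
        {"role": "system", "content": "Reply in JSON."},
        {"role": "user", "content": "What is the total of invoice 1042?"},
    ],
    # JSON mode: ask the model to return a valid JSON object.
    "response_format": {"type": "json_object"},
    # Function calling: describe a tool with a JSON Schema for its arguments.
    "tools": [
        {
            "type": "function",
            "function": {
                "name": "get_invoice_total",
                "description": "Look up the total amount of an invoice.",
                "parameters": {
                    "type": "object",
                    "properties": {"invoice_id": {"type": "integer"}},
                    "required": ["invoice_id"],
                },
            },
        }
    ],
}

body = json.dumps(payload)  # serialized request body, ready to POST
print(len(body) > 0)
```

Check the provider's API documentation for the actual endpoint, model names, and supported fields before relying on any of these.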

gpt-oss-120b

OpenAI's open-source 120B parameter model. Exceptional throughput for high-volume sovereign workloads.

EU Sovereign

Sustainable AI Infrastructure

Up to 5x better energy efficiency than GPU-based inference

Lower Power Consumption

Typical 10kW per rack versus GPU racks consuming 40–50kW+ for equivalent workloads. Dataflow architecture requires fewer chips, translating to dramatic power savings.
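The rack-power comparison can be worked through directly. This sketch compares rack power only and takes the page's "equivalent workloads" premise at face value; since the GPU figure is given as "40–50kW+", the upper ratio is not a hard bound.

```python
# Rack power figures from this page (kW); the GPU range is quoted as 40-50kW+.
DATAFLOW_RACK_KW = 10
GPU_RACK_KW_RANGE = (40, 50)

# Power ratio for the same workload, assuming equal delivered throughput.
ratios = [gpu_kw / DATAFLOW_RACK_KW for gpu_kw in GPU_RACK_KW_RANGE]
low, high = ratios

print(f"GPU racks draw {low:.0f}x to {high:.0f}x the power")  # 4x to 5x
```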

Smaller Footprint

Dramatically reduced physical space, simplified cooling, and lower total infrastructure costs.

Air-Cooled Design

No liquid cooling infrastructure required. Standard air cooling simplifies deployment, reduces maintenance complexity, and lowers operational overhead.

"Not all tokens are created equal. The real value lies not in measuring tokens generated, but in the quality of intelligence delivered per unit of energy consumed."

SambaNova, "Intelligence per Joule"

Advanced Model Capabilities

Massive Model Support

Run large models with hundreds of billions of parameters on a single rack. Supports Composition of Experts (CoE), with 100+ expert models hosted simultaneously.

Long Context Windows

Handle context windows of up to 164K tokens (MiniMax M2.5) and 128K tokens on most other models. The large memory capacity supports long-document analysis, code generation, and reasoning tasks.

Millisecond Model Switching

Multiple models resident in memory simultaneously with millisecond switching latency — orders of magnitude faster than GPU systems. Perfect for agentic AI and multi-model workflows.

European Infrastructure

Hosted in Equinix Munich 4 — Tier III+ certified, carrier-neutral datacenter

SambaNova rack infrastructure — air-cooled, 10kW per rack

Munich-Based Hosting

All data and processing remain within German borders under EU jurisdiction.

No US Jurisdiction

Protection from the US CLOUD Act, the PATRIOT Act, and foreign intelligence access.

AI Act Ready

Infrastructure prepared for EU AI Act requirements and compliance.

Tier III+ Certified

99.982% uptime guarantee with redundant power and cooling systems.
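For context, the uptime percentage can be converted into a yearly downtime budget. The sketch assumes a 365-day year and that the percentage applies over a full year; neither is stated on this page.

```python
# Uptime figure from this page; a 365-day year is assumed.
UPTIME_PERCENT = 99.982
HOURS_PER_YEAR = 365 * 24  # 8,760 hours

downtime_fraction = 1 - UPTIME_PERCENT / 100
downtime_hours = downtime_fraction * HOURS_PER_YEAR
downtime_minutes = downtime_hours * 60

# Roughly 1.58 hours, or about 95 minutes, per year.
print(f"{downtime_hours:.2f} h/year ({downtime_minutes:.0f} min)")
```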

Learn More About the Technology

Explore in-depth resources from SambaNova and independent sources

SambaNova Blog

Intelligence per Joule

Why energy efficiency is the new benchmark for AI infrastructure. Includes independent performance data.

SambaNova Blog

Dataflow Architecture for Inference

How dataflow eliminates GPU bottlenecks and enables efficient multi-model hosting at scale.

SambaNova Blog

Why SN40L Is Best for Inference

Full chip specifications, benchmark data, and architectural deep-dive into the SN40L RDU.

SambaNova Blog

SambaNova & Infercom Partnership

Building Germany's premier sovereign inference cloud — our partnership story in SambaNova's own words.

arXiv

SN40L Architecture Paper

Peer-reviewed technical paper with full chip specifications, CoE benchmarks, and memory hierarchy details.

Artificial Analysis

Independent Performance Benchmarks

Third-party verified inference speed, throughput, and quality metrics across all SambaNova models.
